Lemmatization, in contrast to plain tokenization, involves reducing each word to its root form, which can be helpful but is more computationally expensive (enough so that you would want to preprocess your text rather than do it on the fly).

Create Embeddings

    import os
    import json
    import numpy as np
    from gensim.models import Word2Vec


    def create_embeddings(data_dir, embeddings_path, vocab_path, **params):
        class SentenceGenerator(object):
            """Streams tokenized sentences, one per line, from the files in a directory."""

            def __init__(self, dirname):
                self.dirname = dirname

            def __iter__(self):
                # The text is cut off between `os.` and `dirname, fname)):`;
                # listdir / open / path.join are an assumed reconstruction.
                for fname in os.listdir(self.dirname):
                    for line in open(os.path.join(self.dirname, fname)):
                        yield tokenize(line)

        sentences = SentenceGenerator(data_dir)
        model = Word2Vec(sentences, **params)
        weights = model.  # (the listing breaks off here)
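The sentence generator is written as a class with `__iter__` rather than as a plain generator function. A likely reason is that Word2Vec needs to pass over the corpus more than once (building the vocabulary, then training), and a one-shot generator would be empty on the second pass. The sketch below shows that behaviour without Gensim. The whitespace tokenizer default, the `sorted()` call and the throwaway files are assumptions made only for the demo, not part of the original code.

```python
import os
import tempfile


class SentenceGenerator:
    """Restartable stream of tokenized sentences, one per line of each file."""

    def __init__(self, dirname, tokenize=str.split):
        self.dirname = dirname
        # Assumption: a plain whitespace split stands in for the post's
        # `tokenize` so that this sketch has no third-party dependencies.
        self.tokenize = tokenize

    def __iter__(self):
        # sorted() only makes the file order deterministic for the demo.
        for fname in sorted(os.listdir(self.dirname)):
            with open(os.path.join(self.dirname, fname), encoding="utf-8") as f:
                for line in f:
                    yield self.tokenize(line)


# Tiny throwaway corpus: two files, one sentence per line.
with tempfile.TemporaryDirectory() as data_dir:
    corpus = [("a.txt", "the cat sat\nthe dog ran\n"), ("b.txt", "a bird flew\n")]
    for name, text in corpus:
        with open(os.path.join(data_dir, name), "w", encoding="utf-8") as f:
            f.write(text)

    sentences = SentenceGenerator(data_dir)
    first_pass = list(sentences)
    second_pass = list(sentences)  # iterating again restarts from the first file
```

Because each call to `__iter__` reopens the files, both passes yield the same three sentences, and the whole corpus never has to sit in memory at once.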

Discussion on the Google Group: This topic was hashed out about a year ago on the Keras Google Group, and the discussion has since migrated to its own Slack channel.

GitHub Issue: Another reference, with some relevant code.

`sudo pip install --ignore-installed gensim` works if you don’t already have Keras or Gensim.

Tokenizing

In lexical analysis, tokenization is the process of breaking a stream of text up into words, phrases, symbols, or other meaningful elements called tokens. We want to tokenize each string to get a list of words, usually by making everything lowercase and splitting on the spaces.

    from gensim.utils import simple_preprocess

    # simple_preprocess lowercases the text and returns a list of tokens
    tokenize = lambda x: simple_preprocess(x)
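As a rough illustration of the lowercase-and-split idea behind tokenizing, here is a minimal sketch in plain Python. `naive_tokenize` is a hypothetical helper, not part of Gensim or of the original code. Gensim's `simple_preprocess` does more than this (by default it also strips punctuation and drops very short or very long tokens), so treat this only as a picture of the basic idea.

```python
def naive_tokenize(text):
    """Lowercase the string and split it on whitespace."""
    return text.lower().split()


tokens = naive_tokenize("The quick brown Fox")
# tokens == ["the", "quick", "brown", "fox"]

# Punctuation stays attached to the words, which is one reason a real
# tokenizer is usually the better choice:
with_punctuation = naive_tokenize("Hello, world!")
# with_punctuation == ["hello,", "world!"]
```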

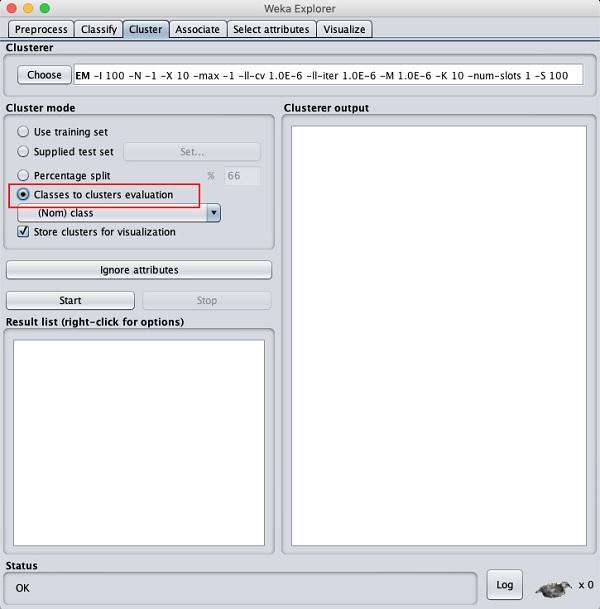

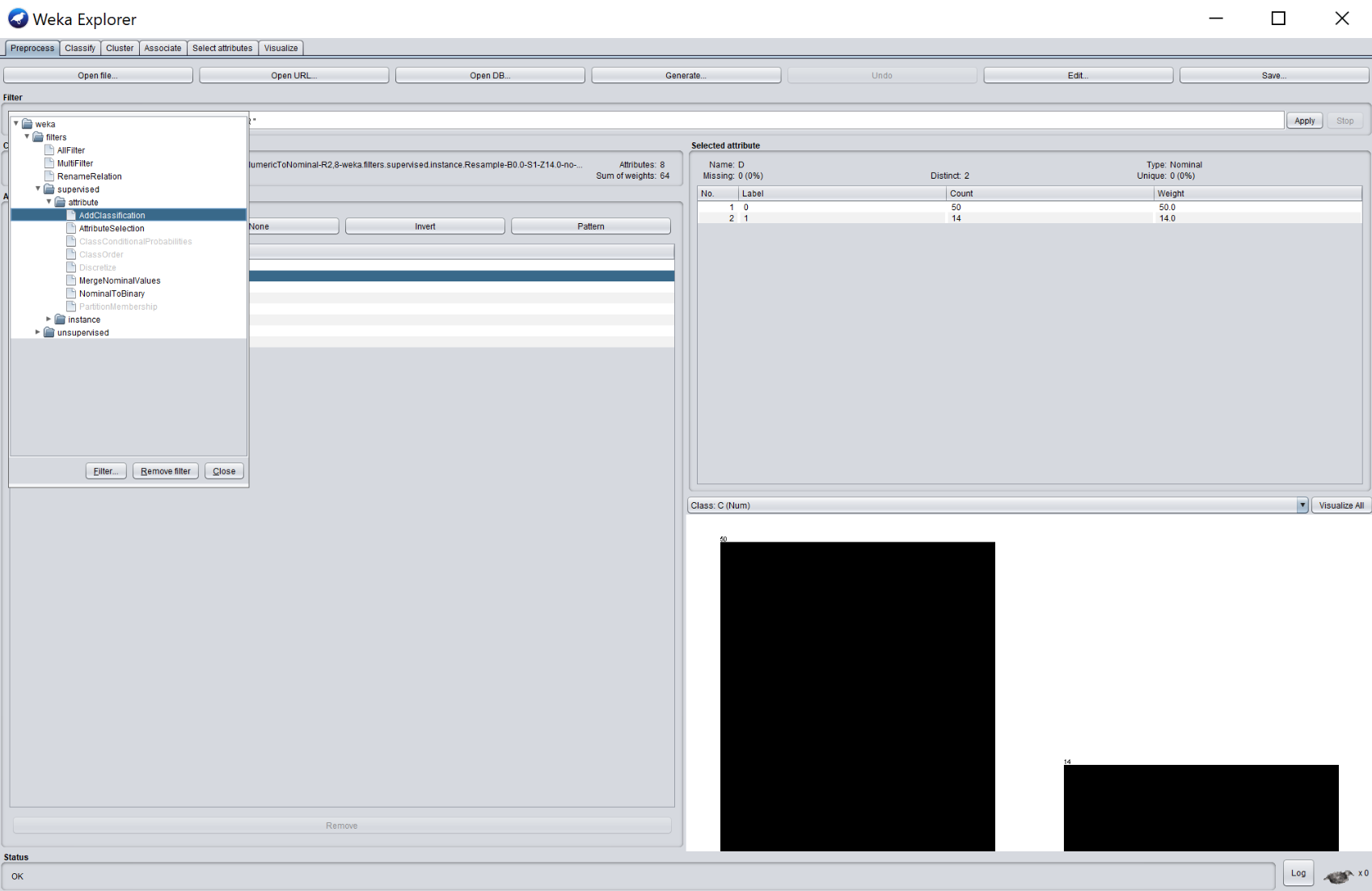


This will be a quick post about using Gensim’s Word2Vec embeddings in Keras. This topic has been covered elsewhere by other people, but I thought another code example and explanation might be useful.

Keras Blog: Francois Chollet wrote a whole post about this exact topic a few weeks ago, so that’s the authoritative source on how to do this.